FROM LOCAL HARDWARE TO GLOBAL SCALE

How Razer AVA Mini Was Powered by Razer AIKit and AkashML

Razer x Akash Network

30 April 2026

AVA Mini: Personalized AI at Campaign Scale

For April Fools’ 2026, Razer launched AVA Mini, an AI pet companion for Razer AVA, the flagship AI desktop companion. The experience went live at razer.com/razer-ava-mini, where users could upload photos of their real pets and receive a unique, personalized AVA Mini character generated from their image. It ran from 31 March through 4 April and was actively promoted across Razer’s channels.

On April 4th, Razer revealed AVA Mini as the centerpiece of their annual April Fools’ campaign — every generated AVA Mini was unique, a personalized artifact that transformed an April Fools concept into something users genuinely kept.

The Razer × Akash Partnership

Making that experience work at campaign scale meant solving three problems at once: cost, reliability, and speed.

Generalist image-generation APIs charge between $0.03 and $0.15 per image depending on provider and model tier, a cost structure that makes a free, consumer-facing tool economically unviable at any meaningful scale. At the same time, a single local machine could not handle the traffic spikes generated by a coordinated campaign launch. Compounding this, the experience demanded near-instant responses, with latency measured in seconds rather than minutes.

The answer came from combining two technologies built for complementary problems. Razer AIKit — Razer’s open-source toolkit for running AI workloads on consumer and professional GPUs — handled the inference. AkashML, Akash Network’s managed inference service running on a decentralized GPU marketplace, handled the scale.

Together, they delivered personalized image generation at $0.01 per image, with an average end-to-end response time of 3.24 seconds including photo upload. The tool launched on March 31st at 8am PT, peaked on April 1st as planned, and ran through April 4th without a single manual intervention.

“The future of AI isn’t just better models – it’s efficient infrastructure. With Razer AIKit, many use cases already run locally. With Akash Network, we extend that into a decentralised cloud to scale efficiently.”

— Quyen Quach, VICE PRESIDENT, Software, Razer

HOW IT WORKS

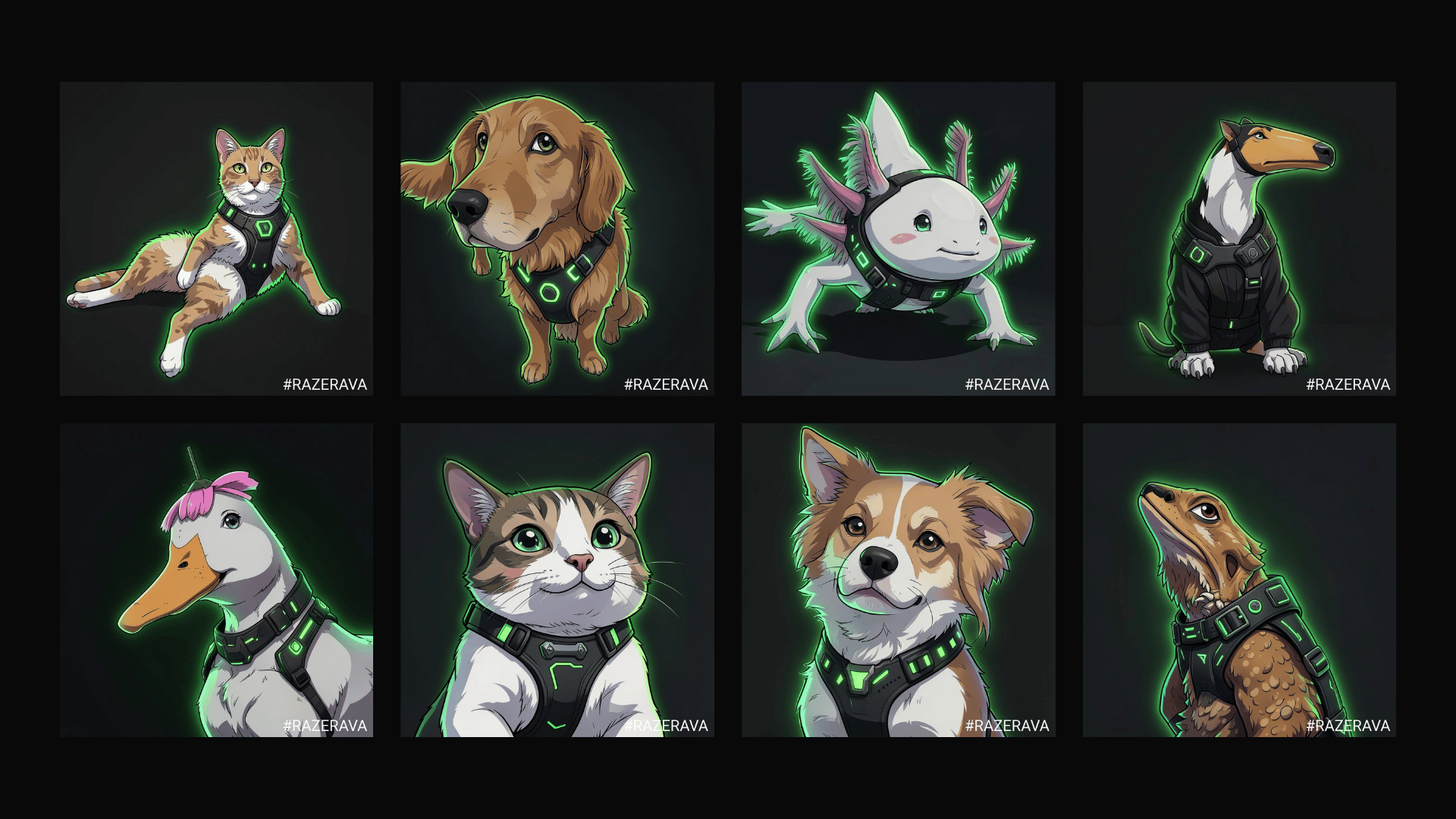

The request flow is straightforward:

Step 1: The user uploads a photo of their pet.

Step 2: The request is sent to a single OpenAI-compatible API endpoint managed by AkashML.

Step 3: AkashML receives the request and load-balances it across available resources.

Step 4: The request is distributed to a pool of Razer AIKit containers.

Step 5: These containers run on consumer-class RTX 5090 and RTX 4090 GPUs across the Akash Network.

Step 6: The system processes the image and generates the output.

Each container runs black-forest-labs/FLUX.2-klein-4B, a 4-billion-parameter model from the Flux family by Black Forest Labs. It was chosen for its image quality, fast generation on consumer hardware, and ability to run entirely within the VRAM of a single RTX GPU. The generated AVA Mini is returned to the user in seconds.

From the user’s perspective, the interaction is a single web page. Under the hood, each request traverses a globally distributed pool of consumer GPUs, orchestrated as a single coherent inference service.
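To make steps 3 and 4 concrete, here is a minimal sketch of one common pooling strategy: send each request to the healthy container with the fewest requests in flight. The source does not say which balancing algorithm AkashML uses, so the strategy, the class names, and the backend names below are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    """One inference container in the pool (names are hypothetical)."""
    name: str
    in_flight: int = 0
    healthy: bool = True


class LeastInFlightBalancer:
    """Route each request to the healthy backend with the fewest active requests."""

    def __init__(self, backends):
        self.backends = list(backends)

    def acquire(self) -> Backend:
        candidates = [b for b in self.backends if b.healthy]
        if not candidates:
            raise RuntimeError("no healthy backends available")
        # min() returns the first minimum, so ties go to the earliest backend.
        chosen = min(candidates, key=lambda b: b.in_flight)
        chosen.in_flight += 1
        return chosen

    def release(self, backend: Backend) -> None:
        """Mark one request on this backend as finished."""
        backend.in_flight -= 1


pool = [Backend("rtx5090-a"), Backend("rtx4090-b"), Backend("rtx5090-c")]
balancer = LeastInFlightBalancer(pool)

first = balancer.acquire()    # all idle, so the tie goes to the first backend
second = balancer.acquire()   # the first backend is busy, so the next idle one is picked
pool[2].healthy = False       # simulate a provider dropping out of the pool
third = balancer.acquire()    # only the two busy backends remain eligible

print(first.name, second.name, third.name)
```

Skipping unhealthy backends at selection time is the simplest form of failover: a provider that drops out stops receiving traffic, and the caller needs no change.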

Razer’s Side: AIKit and vLLM-omni

Razer AIKit is an open-source AI development toolkit released publicly in December 2025. The premise is direct: the NVIDIA GPUs installed in Razer gaming laptops, workstations, and custom builds are more than capable of running production AI workloads — they just need the right tooling around them.

AIKit ships as a Docker container that bundles everything needed out of the box:

- vLLM and vLLM-omni — high-throughput multimodal inference for both text and image generation

- Ray — distributed orchestration across multiple GPUs and nodes

- LLaMA-Factory — parameter-efficient fine-tuning

- Hugging Face integration as the default model source

A single command starts the container, with AI workloads running within minutes. Automatic GPU detection, dependency configuration, and serving-parameter optimization are handled out of the box — no cloud account required.

Razer has benchmarked what this means in practice: three RTX 5090s running AIKit deliver 385 tokens per second on the openai/gpt-oss-120b model — within striking distance of an NVIDIA H100 NVL’s 460 tokens per second, at a fraction of the cost and without the hourly cloud GPU rates. For image generation specifically, the FLUX.2-klein-base-4B model fits comfortably within a single RTX 5090’s VRAM envelope, making the per-GPU economics particularly strong.
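As a quick check on what "within striking distance" means, the two throughput figures quoted above can be compared directly. The per-GPU number is a simple average across the three cards and assumes nothing about how the work was actually split between them.

```python
# Figures quoted in the benchmark above.
rtx5090_cluster_tps = 385   # three RTX 5090s running AIKit
h100_nvl_tps = 460          # one NVIDIA H100 NVL
gpu_count = 3

relative_throughput = rtx5090_cluster_tps / h100_nvl_tps
per_gpu_tps = rtx5090_cluster_tps / gpu_count

print(f"{relative_throughput:.1%} of H100 NVL throughput")
print(f"{per_gpu_tps:.1f} tokens/s per RTX 5090 on average")
```

The three-card setup reaches roughly 84% of the H100 NVL figure, or about 128 tokens per second per card.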

For AVA Mini, the critical component inside AIKit was vLLM-omni — the multimodal inference engine responsible for every image generated during the campaign. Preparing it for the sustained concurrency of a consumer launch required close collaboration between the Razer and Akash engineering teams: a newer upstream vLLM-omni release was integrated into a rebuilt AIKit image, validated end-to-end, and verified in the AkashML production environment — all within a single day. Those improvements have since been contributed back to both the Razer AIKit repository and the upstream vLLM-omni project.

Akash’s Side: Making One API Out of Many GPUs

Akash Network

Akash Network is a decentralized compute marketplace. Providers — ranging from individual GPU owners to enterprise data centers — compete in a reverse-auction system for compute workloads. Deployments run via Kubernetes-native manifests written in Akash’s Software Definition Language (SDL). The model is permissionless, globally distributed, and accessible through Akash Console, Akash’s web-based deployment interface.
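The reverse-auction idea can be sketched in a few lines: providers submit bids for a workload, and the cheapest bid that meets the hardware requirement wins. This is a deliberately simplified illustration. It assumes the tenant always accepts the lowest eligible bid, and the provider names, prices, and the `select_winning_bid` helper are hypothetical rather than part of Akash's software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    provider: str          # hypothetical provider name
    gpu_model: str
    price_per_hour: float  # illustrative units, not real market prices


def select_winning_bid(bids, accepted_gpus, max_price) -> Optional[Bid]:
    """Return the cheapest bid that offers an accepted GPU at or under max_price."""
    eligible = [
        b for b in bids
        if b.gpu_model in accepted_gpus and b.price_per_hour <= max_price
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda b: b.price_per_hour)


bids = [
    Bid("provider-a", "rtx4090", 0.60),
    Bid("provider-b", "rtx5090", 0.55),
    Bid("provider-c", "h100", 0.40),     # cheapest, but not a GPU this workload accepts
    Bid("provider-d", "rtx5090", 0.90),  # right GPU, but over budget
]

winner = select_winning_bid(bids, accepted_gpus={"rtx4090", "rtx5090"}, max_price=0.80)
print(winner)
```

Because providers bid against each other for the same workload, the price is set by the most competitive eligible provider instead of a fixed rate card.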

AkashML

AkashML is the managed inference layer built on top of Akash Network. Where Akash Network provides the raw compute layer — analogous to EC2 — AkashML is the managed inference platform above it. It runs a curated catalog of AI models on open-source inference stacks including vLLM and vLLM-omni, exposes a single OpenAI-compatible API endpoint, and handles load balancing, scaling, failover, and observability automatically. Developers direct their existing clients at AkashML without any changes to the underlying infrastructure.
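The practical meaning of "OpenAI-compatible" is that moving a client between backends mostly comes down to changing a base URL. The sketch below builds the same request for two targets without sending it. It assumes the endpoint follows the OpenAI Images API shape (`POST /images/generations` with a JSON body), which the source does not confirm for AkashML. Both URLs and the API key are placeholders, and the photo-upload step of the AVA Mini flow is left out.

```python
import json
import urllib.request


def build_image_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style image generation request."""
    body = {"model": model, "prompt": prompt, "n": 1}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/images/generations",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder endpoints: a local container and a hosted service.
LOCAL_BASE_URL = "http://localhost:8000/v1"
HOSTED_BASE_URL = "https://inference.example.invalid/v1"
MODEL = "black-forest-labs/FLUX.2-klein-4B"

local_request = build_image_request(LOCAL_BASE_URL, "local-key", MODEL, "a cartoon corgi mascot")
hosted_request = build_image_request(HOSTED_BASE_URL, "hosted-key", MODEL, "a cartoon corgi mascot")

# Only the destination differs; the payload is byte-for-byte identical.
print(local_request.full_url)
print(hosted_request.full_url)
print(local_request.data == hosted_request.data)
```

Passing either request to `urllib.request.urlopen` would send it. That call is omitted here so the sketch runs without network access.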

Pooling AIKit Instances

For AVA Mini, Akash deployed Razer’s AIKit containers across a pool of RTX 4090 and RTX 5090 providers on the Akash marketplace and served a single AkashML-managed endpoint in front of all of them. Multiple AIKit instances — each running on an individual consumer GPU at a different Akash provider — were pooled behind one API. AkashML handled per-request load balancing, rate limiting (500 requests per minute, configurable), and graceful degradation under load.
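A limit of 500 requests per minute can be enforced in several ways. The sketch below uses a sliding-window counter, one common approach. The source does not describe AkashML's actual limiter, so the class, its parameters, and the choice of algorithm are assumptions made for illustration.

```python
from collections import deque


class SlidingWindowRateLimiter:
    """Allow at most max_requests within any trailing window_seconds interval."""

    def __init__(self, max_requests: int = 500, window_seconds: float = 60.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._timestamps = deque()  # arrival times of accepted requests, oldest first

    def allow(self, now: float) -> bool:
        # Drop accepted requests that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_requests:
            return False  # over the limit: the caller should reject or queue
        self._timestamps.append(now)
        return True


limiter = SlidingWindowRateLimiter(max_requests=500, window_seconds=60.0)

# 501 requests arriving at the same instant: the last one is rejected.
accepted = sum(limiter.allow(now=0.0) for _ in range(501))

# One full window later, the earlier requests have expired.
allowed_later = limiter.allow(now=60.0)

print(accepted, allowed_later)
```

Taking the clock as an argument keeps the limiter deterministic and easy to test. In a live service, `now` would come from `time.monotonic()`.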

This approach is what made the unit economics viable. Rather than paying hyperscale cloud rates for dedicated GPU instances, AkashML sourced compute from providers competing on price in real time across a decentralized marketplace.

The technical collaboration was a joint engineering effort throughout. Integrating AIKit into AkashML’s managed production environment — which operates at concurrency levels beyond what most local-first toolchains are designed for — required co-tuning the runtime stack for sustained high-concurrency traffic.

“We’re thrilled about leveraging Razer’s AIKit on Akash’s distributed compute network and seeing it in action during the April Fools’ campaign. The unit economics couldn’t work out better. I’m excited for collaborating further on Akash Homenode and deploying on Razer products to expand Akash’s compute landscape.”

— GREG OSURI, FOUNDER, AKASH NETWORK

The Numbers

Cost came in at $0.01 per image on AkashML, compared to $0.03–$0.15 on typical generalist inference APIs for equivalent Flux-family generation. That is a 3x to 15x cost reduction, depending on the reference provider.

| Provider | Cost per Image | Notes |
|---|---|---|
| Typical generalist inference API | $0.03–$0.15 / image | Market range for equivalent Flux-family generation |
| AkashML + AIKit | $0.01 / image | FLUX.2-klein-base-4B on decentralized consumer GPUs |

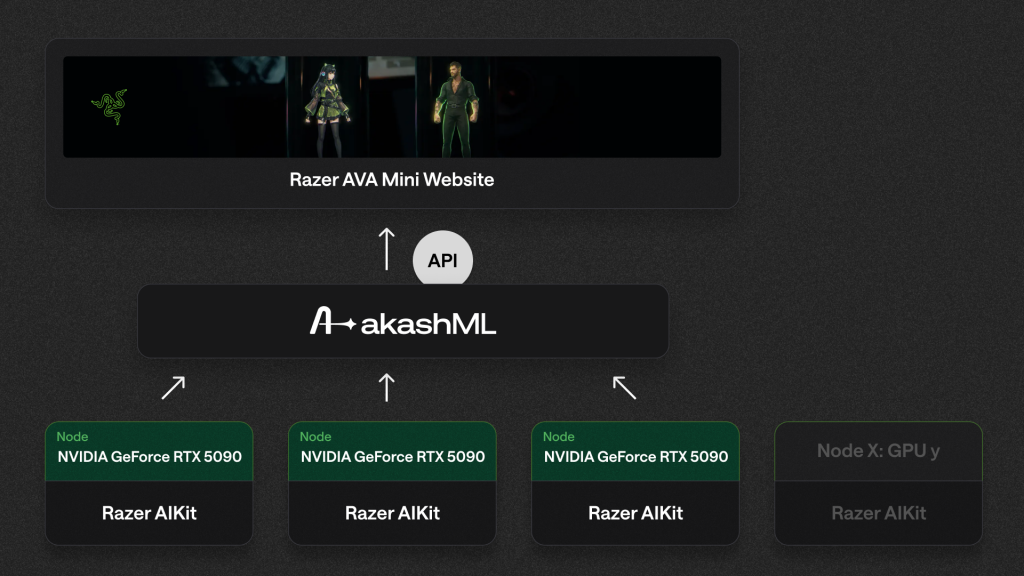
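The 3x to 15x range follows directly from the per-image prices in the table. The short calculation below reproduces it and then projects the gap onto an example volume. The 100,000-image figure is a hypothetical chosen for illustration, not a reported campaign total. Prices are held in whole cents to avoid floating-point rounding.

```python
# Per-image prices from the table above, in cents.
akashml_cents = 1
api_low_cents, api_high_cents = 3, 15

reduction_low = api_low_cents / akashml_cents
reduction_high = api_high_cents / akashml_cents
print(f"Cost reduction: {reduction_low:.0f}x to {reduction_high:.0f}x")


def total_cost_usd(images: int, cents_per_image: int) -> float:
    """Total spend in US dollars for a given number of generated images."""
    return images * cents_per_image / 100


hypothetical_images = 100_000  # illustrative volume only
akashml_total = total_cost_usd(hypothetical_images, akashml_cents)
api_total_low = total_cost_usd(hypothetical_images, api_low_cents)
api_total_high = total_cost_usd(hypothetical_images, api_high_cents)

print(f"AkashML + AIKit: ${akashml_total:,.0f}")
print(f"Generalist API:  ${api_total_low:,.0f} to ${api_total_high:,.0f}")
```

At that example volume the difference is $1,000 against $3,000 to $15,000, the kind of gap that decides whether a free consumer tool is affordable to run.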

Speed averaged 3.24 seconds end-to-end across the campaign window — including upload and transfer of the user’s pet photo.

Throughput reached up to 30 images per minute across the Akash provider pool at peak load on April 1st.

Scale was handled automatically. As traffic climbed toward the April 1st peak, additional AIKit instances spun up across the Akash provider pool without manual intervention — no capacity ceilings reached, no on-call escalations required.
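The reported throughput and latency figures allow a rough capacity estimate using Little's law: average requests in flight equal arrival rate multiplied by average time in the system. The sketch combines the peak throughput with the campaign-wide average latency, which is itself an approximation. The per-instance concurrency and the headroom factor are assumed values, not figures from the campaign. Because the 3.24 seconds includes photo upload, actual GPU time per request is lower and the estimate is conservative.

```python
import math

# Figures reported above.
peak_images_per_minute = 30
avg_latency_seconds = 3.24

arrival_rate_per_second = peak_images_per_minute / 60
avg_in_flight = arrival_rate_per_second * avg_latency_seconds  # Little's law: L = lambda * W


def instances_needed(rate_per_second: float, latency_seconds: float,
                     concurrency_per_instance: int = 1, headroom: float = 2.0) -> int:
    """Estimate instance count for a target load.

    concurrency_per_instance and headroom are illustrative assumptions.
    """
    required = rate_per_second * latency_seconds * headroom / concurrency_per_instance
    return math.ceil(required)


estimate = instances_needed(arrival_rate_per_second, avg_latency_seconds)
print(f"Average requests in flight at peak: {avg_in_flight:.2f}")
print(f"Instances needed with 2x headroom: {estimate}")
```

An autoscaler built on this idea would recompute the estimate as the arrival rate changes and add or remove instances to match.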

What Comes Next

Both Razer and Akash continue actively expanding the capabilities of this platform.

A key milestone in that expansion is already live: Razer AIKit is now also available on Akash Console, Akash Network’s web-based interface for deploying and managing applications without CLI tools. This gives developers and GPU operators a streamlined, browser-based way to deploy AIKit workloads across Akash’s decentralized compute marketplace, using the same deployment architecture that powered the AVA Mini campaign. Builders no longer have to choose between hyperscale pricing and hard scaling ceilings: a viable alternative has been proven at scale and is now accessible from a browser.

Akash Homenode, a program that lets individual owners of RTX 4090s, RTX 5090s, and RTX Pro 6000 Blackwell GPUs contribute their idle compute to the Akash marketplace, opened beta sign-ups in Q1 2026. As Homenode grows, it brings a new tier of geographically distributed, consumer-class GPU supply into the AkashML provider pool, further improving economics and resilience.

The cost advantages demonstrated in the AVA Mini campaign are expected to improve further as that supply comes online.

Razer AIKit is expanding into multimodal output. Video and voice generation are the logical next extensions of this architecture. vLLM-omni already supports emerging audio workloads alongside image generation, and AkashML’s managed model catalog is expanding to cover these modalities. The same infrastructure that produced personalized AVA Mini characters from pet photos is architecturally ready for short-form video, voice synthesis, and richer generative media — served through the same consumer GPU pool and the same API surface.

Closing

The AVA Mini campaign validated its architecture in production. Razer AIKit and AkashML together demonstrate a deployment model that has long been treated as a thought experiment: a single container runtime that scales from a developer’s desk to a multi-GPU cluster to a globally distributed GPU marketplace, with no code changes and no operational surprises. For AI teams building consumer-facing experiences who face a choice between hyperscale pricing and hard scaling ceilings, this integration represents a viable third path — proven at scale.

RESOURCES

Razer AIKit: razer.ai/aikit | github.com/razerofficial/aikit

Akash Network: akash.network

AkashML: akashml.com

Akash Homenode: homenode.akash.network